Ever wish there was an easy way to add rich animation to your Flutter UIs? I did, so when we started working on the Wonderous app, it seemed like the perfect excuse to build a best-of-breed open-source animation package.

Warning: There is a GIF at the bottom of the post with flashing images.

Like many of you, I was inspired by the impressive visuals of Spider-Man: Into the Spider-Verse (which, by the way, has a website built using gskinner’s CreateJS libraries) and wanted to try applying some of that style to one of my animations.

On top of that, I’ve had the idea of making a walk cycle with an astronaut for a while and decided it was time to make it.

You know that sense of awe when you see inspiring work? The kind of feeling that makes you say “wow, I wish I could do that.” Seeing Ash Thorp, GMUNK and Joey Camacho’s CG work makes me feel that all the time. There’s so much thought that goes into their compositions and I wanted to see if I could emulate some of that using Blender. The following is a summary of what I’ve learned through experimenting with CG. You can also see the final results of what I made here. I hope this encourages you to have fun making your own crazy creations after reading!

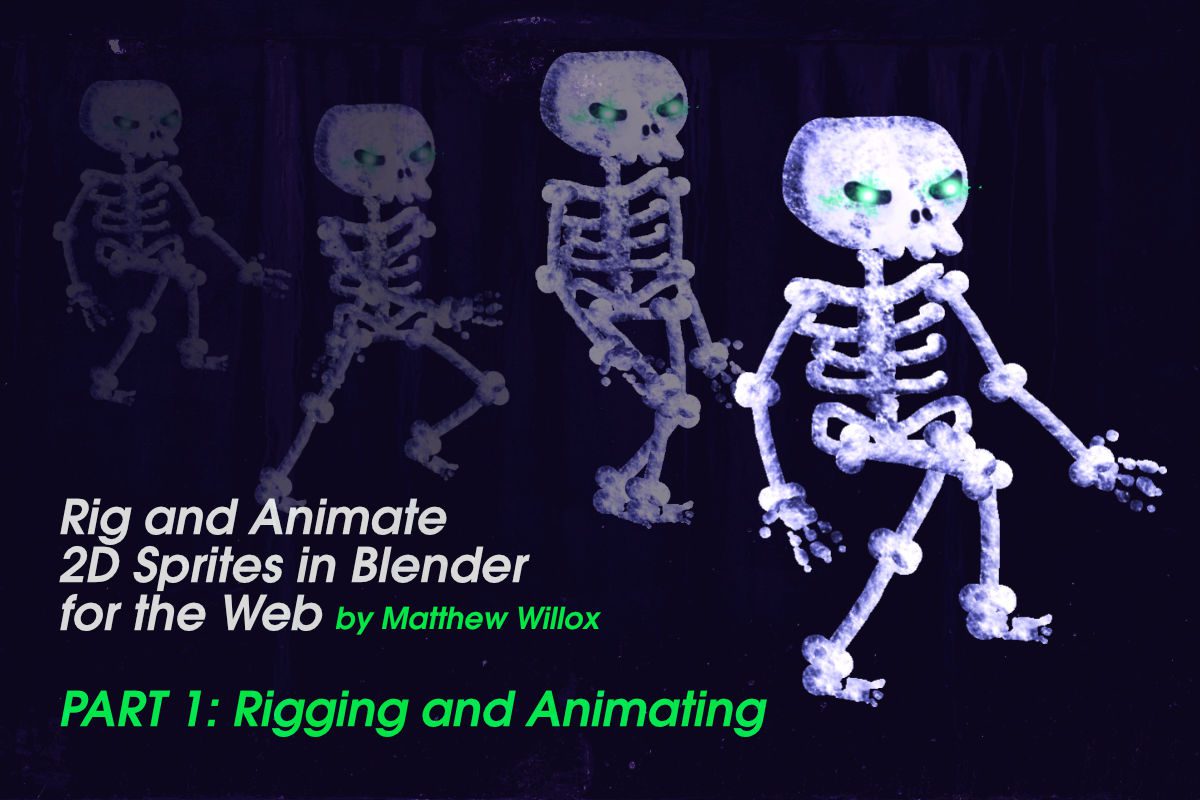

This is a two-part tutorial that explains how to rig and animate 2D sprites in Blender and export them for use on the web.

In 2014, we worked with BioWare to create the ISS: an interactive, animated cinematic of a player’s history in the first two games of the Dragon Age series, narrated by one of the characters, Varric.

When we first met with BioWare’s online team to discuss the Interactive Story Summary, we were floored. It’s always a privilege to work with one of the best game development companies in the world, and the ISS presented a challenge that was perfectly suited to some of the tech we have been focused on for the last few years. The goal of the ISS is to summarize the complex narrative and the decisions that players have made in previous games in the series, and to give them control of those choices leading into the latest chapter, Dragon Age: Inquisition.

We recently spent some time playing with a new feature in Chrome called Web Audio Input, which provides access to a microphone or other audio input.

The result was “Orcastra” (ha!), which lets users select one of five deep sea characters that lip sync along to the user’s audio input.

Creating Orcastra was quite straightforward. The characters were all created in the Flash CS6 IDE (MovieClips with timeline animations) and exported to HTML5 using Toolkit for CreateJS. We used PreloadJS to load all our assets, TweenJS for simple tweens, and EaselJS as the display layer.

We use feature detection to ensure the browser supports audio input, prompt users to allow access to their microphone, then listen for volume changes (with maximum mic gain) to confirm audio input before showing the character selection screen. Once the user picks a character, it’s just a simple matter of syncing the CreateJS animations with the current input volume level.
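The flow described above can be sketched roughly as follows. This is a minimal illustration, not the actual Orcastra source: the function names `supportsAudioInput`, `rmsLevel`, and `levelToFrame` are hypothetical, and so are the choice of RMS as the volume measure and the idea of mapping volume to a frame index in a mouth animation. The helpers are written as plain functions so they can be tried outside a browser.

```javascript
// Hypothetical helpers for illustration; none of these names come from Orcastra.

// Feature detection: takes a navigator-like object and reports whether some
// form of getUserMedia (standard or legacy/prefixed) is available on it.
function supportsAudioInput(nav) {
  return Boolean(
    nav &&
      ((nav.mediaDevices && typeof nav.mediaDevices.getUserMedia === "function") ||
        typeof nav.getUserMedia === "function" ||
        typeof nav.webkitGetUserMedia === "function")
  );
}

// Root-mean-square level of a buffer of audio samples in the range [-1, 1].
// Assumption: RMS stands in for "volume"; the original post does not say
// how volume was measured.
function rmsLevel(samples) {
  if (samples.length === 0) return 0;
  let sumSquares = 0;
  for (let i = 0; i < samples.length; i++) {
    sumSquares += samples[i] * samples[i];
  }
  return Math.sqrt(sumSquares / samples.length);
}

// Map a volume level to a frame index in a mouth animation that has
// `frameCount` frames. `gain` boosts quiet input; the result is clamped
// to the valid range 0 .. frameCount - 1.
function levelToFrame(level, frameCount, gain = 1) {
  const clamped = Math.min(1, Math.max(0, level * gain));
  return Math.min(frameCount - 1, Math.floor(clamped * frameCount));
}
```

In a browser, the samples could come from an `AnalyserNode` (for example via `getFloatTimeDomainData`), and the resulting frame index could be passed to an EaselJS `MovieClip`'s `gotoAndStop` on each tick. That wiring is omitted here because it depends on how the exported animations are structured.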

Orcastra should work with any Web Audio Input-enabled browser. This currently includes the latest version of Chrome for OS X and Chrome Canary for Windows, but you’ll need to enable the feature in chrome://flags and restart your browser before trying it.