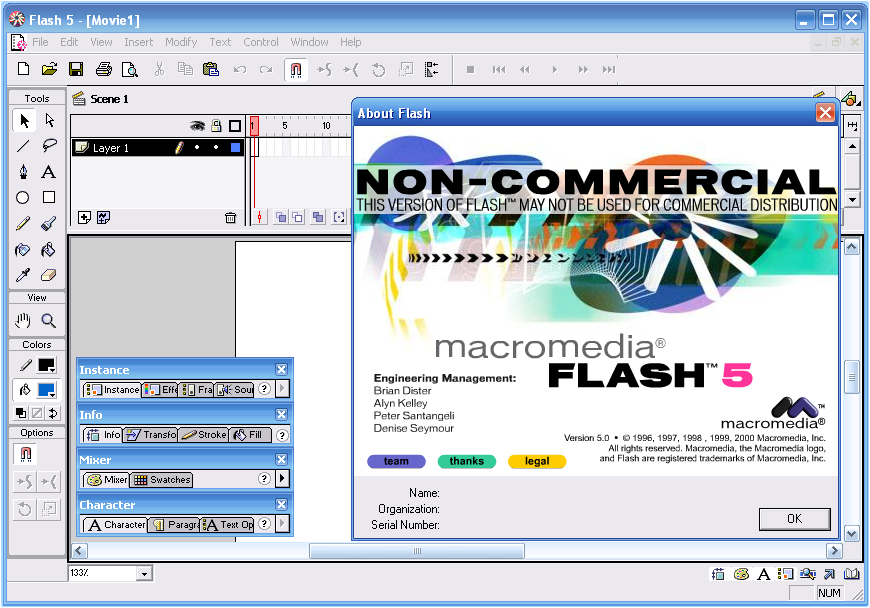

Back in the good ol’ days, Flash was a very popular way to play media. It was used, online and offline, for displaying animations, showing presentations, and general advertising. Though there weren’t all that many options back then, that popularity came mostly from its simplicity. It could animate vector art very smoothly, as opposed to large, clunky .gifs, and it let users interact through simple mouse clicks and keyboard input. Macromedia Flash itself also had a very simple interface, and was used in schools all over to help teach students multimedia and animation.

As the internet evolved, so did Flash. As video playback started to become popular, Flash had no choice but to adapt to keep up with competition and provide its users with the best experience possible. This involved cutting corners, taking back-doors, and even adding a whole new programming language to the mix at one point.

Despite their best attempts, it wasn’t always simple.

History

Share if you remember this screen!

Flash animations were comparatively small, which was good because connection speeds at the time were very slow. Downloading full-sized .avi and .wmv videos could take hours and eat all the allowed data usage for the day. Nowadays, however, connection speeds have increased, videos have become smaller in file size thanks to the .mp4 and .f4v formats, and… simply put: YouTube. Because using a video camera was much easier than manually tweaking frames, fully colored real-life videos became more popular online than Flash animations.

To keep up with this trend, Macromedia (Adobe did not acquire it until 2005) gave Flash the means to play video files in Macromedia Flash MX (Flash 6) in 2002. In order to keep the player itself small, Flash loaded videos directly from a file or URL using a NetConnection, and then parsed them in real time using a NetStream object. In code, all one had to do was load the video through NetConnection and NetStream within ActionScript, and then display the video on the screen.

var nc:NetConnection = new NetConnection();

nc.connect(null);

var ns:NetStream = new NetStream(nc);

ns.play("http://www.mySite.com/myVideo.mp4");
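For anyone following along in ActionScript 3, here is a slightly fuller sketch of the same idea. It adds a client object so the stream's onMetaData callback has somewhere to land (without one, the player reports an async error when metadata arrives) and logs the NetStatusEvent codes. The class name BasicPlayback is made up for illustration, and the URL is the same placeholder as above.

```actionscript
package {
    import flash.display.Sprite;
    import flash.events.NetStatusEvent;
    import flash.net.NetConnection;
    import flash.net.NetStream;

    // Hypothetical document class, for illustration only.
    public class BasicPlayback extends Sprite {
        private var nc:NetConnection;
        private var ns:NetStream;

        public function BasicPlayback() {
            nc = new NetConnection();
            nc.connect(null); // null = plain file/HTTP playback, no media server

            ns = new NetStream(nc);
            // The stream calls onMetaData on its client once the header is parsed.
            ns.client = { onMetaData: onMetaData };
            ns.addEventListener(NetStatusEvent.NET_STATUS, onNetStatus);
            ns.play("http://www.mySite.com/myVideo.mp4"); // placeholder URL
        }

        private function onMetaData(info:Object):void {
            trace("duration:", info.duration, "size:", info.width, "x", info.height);
        }

        private function onNetStatus(e:NetStatusEvent):void {
            // Codes such as "NetStream.Play.Start" or "NetStream.Play.StreamNotFound"
            trace(e.info.code);
        }
    }
}
```

Nothing is drawn yet at this point; the stream still needs something to display it, which is where the objects below come in.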

Simple, as always. So much so that even YouTube used Flash to play many of its videos for a while.

Could never permanently solve this one on Chrome…

However, technology has its limits. Videos kept on getting longer and larger as higher qualities and bigger screens came into demand. Online, Flash could not access the GPU, so it instead relied on the CPU to play back video. Although this worked for a while, it was still very limited and gave rise to many performance problems.

Imagine making a company’s secretary, Ms. CPU, program a client’s app instead of the specialized developer in the other room, Mr. GPU. She can do it herself, of course, but she’s got a lot of other things on her plate as well (data organization, loading files, phone calls), and we can’t let clients use Mr. GPU because he’s under NDA and is quite prone to letting out company secrets.

Artist’s depiction of what the CPU looks like in the digital realm.

Given a very large video, performance became a huge issue in Flash, especially when Flash was also used to do other things with the video, like adding video effects, on-screen additions such as a player UI, or joke material. Why else use Flash for playing back video instead of a generic player if not for that?

Boing boing!

And who handles all that extra work? Ms. CPU.

Fortunately, Adobe didn’t just say “tough luck” and sit around doing nothing. They got to work, and they committed a lot to making video playback as smooth as possible. Here’s a run-through of the many different methods Flash used to play back video.

Video Object

The simplest answer to playing video was… actually, IS the Video object. It’s an extension of a DisplayObject, which is simply added to the stage like any other sprite. Simply give the Video the playing NetStream, set, and forget. The video playback itself is controlled by the NetStream, so there’s nothing else to it.

var video:Video = new Video();

stage.addChild(video);

video.attachNetStream(ns);

Since the Video object is a display object just like a sprite, it could be messed with: moved around the screen, rotated, scaled, even tweened to bounce back and forth around the screen.

A player for when you’re lying in bed without a pillow.

Adobe put a lot of optimizations into the Video object, but it was still fully reliant on poor Ms. CPU. When it also had to deal with being tossed and turned around, the lag multiplied.

StageVideo

Some brilliant mind somewhere came up with a solution to the GPU security problem. All we had to do was only give the client a predetermined set of commands they can tell Mr. GPU to do. A quick-chat for the GPU, if you will. Then all the GPU would, or rather “could”, give back is direct video/audio playback without anything extra.

StageVideo was born, and boy, is it strict. First, you cannot create new StageVideos; they are automatically created in a set of 4 for desktop and 1 for mobile. Second, it’s not a sprite, so you cannot move it around like one. All you can do is determine the viewport by setting a rectangle object, apply a zoom, and rotate it in 90 degree intervals if given permission by the app. That’s it.

// stageVideos is always a Vector, so check its length rather than its existence.

if (stage.stageVideos.length > 0) {

var sv:StageVideo = stage.stageVideos[0];

sv.attachNetStream(ns);

sv.viewPort = new Rectangle(0, 0, 960, 480);

}
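In practice the hardware path is not always there, so a player usually wants to listen for availability and fall back to the plain Video object. Below is a minimal sketch of that pattern, assuming it runs as the document class (so stage is already set in the constructor). The class name StageVideoFallback and the 960×540 size are made up for illustration.

```actionscript
package {
    import flash.display.Sprite;
    import flash.events.StageVideoAvailabilityEvent;
    import flash.geom.Rectangle;
    import flash.media.StageVideo;
    import flash.media.StageVideoAvailability;
    import flash.media.Video;
    import flash.net.NetConnection;
    import flash.net.NetStream;

    // Hypothetical document class: prefers StageVideo, falls back to Video.
    public class StageVideoFallback extends Sprite {
        private var nc:NetConnection;
        private var ns:NetStream;
        private var video:Video; // CPU fallback, created only if needed

        public function StageVideoFallback() {
            nc = new NetConnection();
            nc.connect(null);
            ns = new NetStream(nc);
            ns.client = { onMetaData: function(info:Object):void {} };

            // Tells us whether the GPU video path can be used right now.
            stage.addEventListener(
                StageVideoAvailabilityEvent.STAGE_VIDEO_AVAILABILITY,
                onAvailability);
            ns.play("http://www.mySite.com/myVideo.mp4"); // placeholder URL
        }

        private function onAvailability(e:StageVideoAvailabilityEvent):void {
            if (e.availability == StageVideoAvailability.AVAILABLE
                    && stage.stageVideos.length > 0) {
                // GPU path: detach the fallback first if it was in use.
                if (video) {
                    video.attachNetStream(null);
                    if (video.parent) removeChild(video);
                }
                var sv:StageVideo = stage.stageVideos[0];
                sv.attachNetStream(ns);
                sv.viewPort = new Rectangle(0, 0, 960, 540);
            } else {
                // CPU path: a regular Video object on the display list.
                if (!video) video = new Video(960, 540);
                video.attachNetStream(ns);
                addChild(video);
            }
        }
    }
}
```

The same NetStream drives both paths, so playback controls do not need to know which one ended up on screen.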

But it plays videos beautifully. Very few performance issues even with the largest of videos. And since it’s all in the background, the developer can still add a UI and additional effects in front of it for the CPU to mess with. They just cannot hide anything behind it.

The Flash stage is in front of StageVideo.

Performance was taken care of. However, we lost one big thing in the process: freedom. Because you cannot apply effects and features to StageVideo, it became no different (visually) from an HTML/JavaScript video player. This wasn’t going to be enough; designers and users cherished the freedom and flexibility that Flash provided.

So now what? Now it gets complicated.

Hello World

Context3D

Alongside StageVideo, an entire framework was created to communicate with the GPU. This is very similar to HTML’s WebGL, so those of you who know the roots of WebGL will recognize a lot of the methodology.

First, a programming language was needed to let the developer pass manual commands to the GPU. While WebGL uses GLSL, Flash adopted a language called AGAL. Both are very similar in layout and functionality, but it’s still safe to call them two fairly different languages.

m44 op, va0, vc0

mov v0, va1

Next, as with WebGL, Flash got its own Context3D object to take this AGAL code and render with it using the GPU. The Context3D takes in a set of vertex points so it knows what physical shapes to render in 3D space, and it takes in texture data to render the pixels in between. To physically display it on the screen, Stage3D was created as a base framework.

For all your 3D storage needs.

All rendering is done by the GPU. Properly coded and refined, the CPU barely has to do anything at all beyond the occasional data update if you want something more interesting than a bunch of shapes hanging around doing nothing.
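To make that a bit more concrete, here is a rough sketch of the whole setup for one full-screen rectangle: request a context, upload four vertices and six indices, compile the two-line vertex program from above, and draw. It assumes the AGALMiniAssembler utility class (com.adobe.utils, distributed separately by Adobe and not part of the runtime API) is on the source path, and that this is the document class. The class name QuadSetup, the 960×540 back buffer, and the fragment program that simply outputs the UV coordinates as a color are all choices made for illustration.

```actionscript
package {
    import com.adobe.utils.AGALMiniAssembler; // separate utility, not built in
    import flash.display.Sprite;
    import flash.display.Stage3D;
    import flash.display3D.Context3D;
    import flash.display3D.Context3DProgramType;
    import flash.display3D.Context3DVertexBufferFormat;
    import flash.display3D.IndexBuffer3D;
    import flash.display3D.Program3D;
    import flash.display3D.VertexBuffer3D;
    import flash.events.Event;
    import flash.geom.Matrix3D;
    import flash.utils.ByteArray;

    // Hypothetical document class, for illustration only.
    public class QuadSetup extends Sprite {
        private var ctx:Context3D;
        private var indexBuffer:IndexBuffer3D;

        public function QuadSetup() {
            var stage3D:Stage3D = stage.stage3Ds[0];
            // Also fires again after a lost context, so everything is rebuilt here.
            stage3D.addEventListener(Event.CONTEXT3D_CREATE, onContextCreated);
            stage3D.requestContext3D();
        }

        private function onContextCreated(e:Event):void {
            ctx = Stage3D(e.target).context3D;
            ctx.configureBackBuffer(960, 540, 0, false);

            // Four corners of the screen: x, y, z, u, v per vertex.
            var vertices:Vector.<Number> = Vector.<Number>([
                -1,  1, 0,   0, 0,
                 1,  1, 0,   1, 0,
                 1, -1, 0,   1, 1,
                -1, -1, 0,   0, 1
            ]);
            var vb:VertexBuffer3D = ctx.createVertexBuffer(4, 5);
            vb.uploadFromVector(vertices, 0, 4);
            ctx.setVertexBufferAt(0, vb, 0, Context3DVertexBufferFormat.FLOAT_3); // va0 = position
            ctx.setVertexBufferAt(1, vb, 3, Context3DVertexBufferFormat.FLOAT_2); // va1 = UV

            // Two triangles make the rectangle.
            indexBuffer = ctx.createIndexBuffer(6);
            indexBuffer.uploadFromVector(Vector.<uint>([0, 1, 2, 0, 2, 3]), 0, 6);

            var vs:ByteArray = new AGALMiniAssembler().assemble(
                Context3DProgramType.VERTEX,
                "m44 op, va0, vc0\n" + // position * matrix -> output position
                "mov v0, va1");        // pass UV on to the fragment program
            var fs:ByteArray = new AGALMiniAssembler().assemble(
                Context3DProgramType.FRAGMENT,
                "mov oc, v0");         // no texture yet: show the UVs as a color
            var program:Program3D = ctx.createProgram();
            program.upload(vs, fs);
            ctx.setProgram(program);

            // vc0..vc3 hold the matrix; identity leaves the quad filling the screen.
            ctx.setProgramConstantsFromMatrix(
                Context3DProgramType.VERTEX, 0, new Matrix3D(), true);

            ctx.clear(0, 0, 0, 1);
            ctx.drawTriangles(indexBuffer, 0, 2);
            ctx.present();
        }
    }
}
```

To show a texture instead of a gradient, the fragment program would sample one (for example with a tex instruction into a temporary register) and the texture would be bound with setTextureAt before drawing.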

Um… what was I talking about again? Oh yeah, video! So what about video? How do we get the GPU to play video?

Shapes can be rendered in 3D space and textures can be applied to them. Textures can also be changed on the fly to create dynamic images. So, with that in mind, why not change some very large textures super-quickly and render those on a flat plane? Lo and behold, we’re playing video on the GPU!

Nope. Swapping out textures involves making the CPU pass the data to the GPU, which actually takes a lot of time. I mean a LOT, especially for large texture files. In fact, it’s way less performant to do something like this than to simply use the Video object to render with just the CPU.

With that in mind, if you wanted an animated sprite in 3D, the best way to do it is to pass the GPU a giant spritesheet and play that by changing the texture’s UV coordinates with each update.

Edit: Who’s that handsome fella?

Before you say “then make a spritesheet of the video!”, here’s why that won’t work:

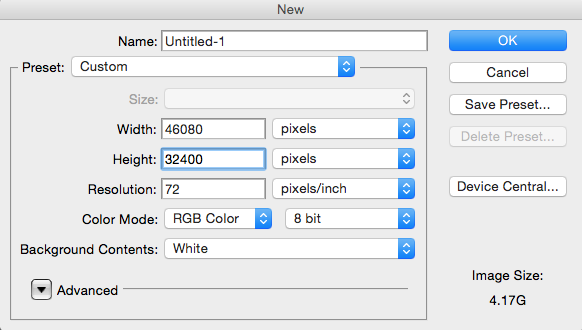

Let’s take a 2-minute 540p (960×540) video at 24 FPS. Standard YouTube meme video. That’s 120 seconds times 24 frames, which is 2,880 frames of 960×540 each. In a 48×60 grid of these frames, we end up with an image that’s 46,080 × 32,400 pixels large, or 1,492,992,000 pixels. At 4 bytes per pixel uncompressed, that is roughly 6 GB of texture data. Now try to make a file that size in Photoshop and see if that will fit in your 2GB or 4GB graphics card.

Keep in mind some YouTube videos can go up to 6 hours.

Nope. Worst case: it will freeze your screen and force you to hard-restart your computer. To prevent bad programs from doing this, the context will only let you pass in textures up to 2048×2048 pixels (why would you need any larger?), and you can only upload up to 16 of them at a time. So it won’t work out anyway.
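For the skeptics, here is the arithmetic above written out as a small script. It is only a back-of-the-envelope sketch: the 4 bytes per pixel (uncompressed RGBA) is an assumption, the 2048×2048 and 16-texture limits are simply the figures quoted above, and the class name SpritesheetMath is made up.

```actionscript
package {
    import flash.display.Sprite;

    // Hypothetical document class that just traces the numbers.
    public class SpritesheetMath extends Sprite {
        public function SpritesheetMath() {
            // Number (a double) rather than int: the byte count overflows 32 bits.
            var frameW:Number = 960;
            var frameH:Number = 540;
            var fps:Number = 24;

            var frames:Number = 120 * fps;                // 2880 frames
            var pixels:Number = frames * frameW * frameH; // 1,492,992,000 pixels
            var bytes:Number = pixels * 4;                // 5,971,968,000 bytes (assumed RGBA)

            trace("frames:", frames);
            trace("pixels:", pixels);
            trace("GiB:", (bytes / (1024 * 1024 * 1024)).toFixed(2)); // 5.56

            // How much video fits within the limits quoted above?
            var maxSide:Number = 2048;
            var maxTextures:Number = 16;
            var perTexture:Number =
                Math.floor(maxSide / frameW) * Math.floor(maxSide / frameH); // 2 * 3 = 6
            var framesThatFit:Number = perTexture * maxTextures;             // 96
            trace("seconds that fit:", framesThatFit / fps);                 // 4
        }
    }
}
```

So even with every texture slot packed full, those limits leave room for about four seconds of our meme video.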

So… now what? Why even mention all this if we can’t play videos?

VideoTexture

Heard of back-doors before? … yes, your house usually has one, but another kind of back-door is an opening in code through which somebody can access otherwise intentionally private data. Unannounced and unknown to the client, these are a real threat to any and all programs. Not only could a program’s back-door be found and exploited by someone with malicious intent, but some malicious programmers even purposely put back-doors in the code they give to their clients for that exact reason. Pretty scary, eh? Make sure you can trust your developers and treat them with respect.

Well, Adobe has recently created an intentional back-door for the GPU. Don’t worry, it’s a good one. Its name is VideoTexture. Very friendly back-door; likes earl grey tea and biscuits on the patio.

var ctx:Context3D = stage3D.context3D;

var vt:VideoTexture = ctx.createVideoTexture();

vt.attachNetStream(ns);

Basically, it’s a single texture object with some extra functionality. You get it through the context, similar to how you get a StageVideo from the stage. It also takes in a reference to a playing NetStream, which passes in video data. When it’s ready to go, you apply it to the context like any other texture. It just takes up one of the sixteen texture slots. No big deal. VideoTexture then takes that video data and, using its own self-made back-door, feeds it right into the GPU. When successful, it dispatches an event that lets us know that its texture is ready, and then we can tell the context to render as normal.

This way, not only is the video stored safely on the hard drive, but we’re back to using NetStream to control its playback. Not just that, but it even comes with its own specialized update event, Event.TEXTURE_READY. Basically, it only fires when the next frame has finished rendering, so there’s no guesswork or timing involved.

vt.addEventListener(Event.TEXTURE_READY, onTextureUpdate, false, 0, true);

function onTextureUpdate(e:Event):void {

ctx.clear(0, 0, 0, 1);

ctx.drawTriangles(indexBuffer, 0, 2); // Just need a rectangle screen.

ctx.present();

}

Pretty awesome, eh?

So now we’re using the GPU to render the video, and that’s only the start. Using frameworks like Starling, or manually creating programs using AGAL, one can apply effects to the video like masking, cropping, filters, and even some new 3D effects. After all, we are using a full 3D renderer to do this. May as well take advantage of its potential.

Yo dawg, I heard you liked videos…

Mind, though, that VideoTexture is still new at this. There are still some limitations to watch out for. For example, it automatically sets its own hardware acceleration status based on what it thinks its environment is like. This can lead to unreliable playback when it freaks out and switches to software mode (CPU only) almost randomly at times. Also, it’s still in a beta stage (as of right now) and is only available in Adobe AIR, so it cannot yet be used with online Flash players. For that to happen, it may have to either pair up with WebGL or do some pretty crazy security-based coding of its own.
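One way to at least notice when that switch happens is to check for support up front and then listen for the texture's render-state event. A minimal sketch follows, assuming a Context3D and a NetStream already exist. The helper class VideoTextureGuard and its attach method are made-up names; the flags and events it uses are the runtime's own.

```actionscript
package {
    import flash.display3D.Context3D;
    import flash.display3D.textures.VideoTexture;
    import flash.events.VideoTextureEvent;
    import flash.media.VideoStatus;
    import flash.net.NetStream;

    // Hypothetical helper, for illustration only.
    public class VideoTextureGuard {
        // Returns a VideoTexture fed by ns, or null if the runtime has no support.
        public static function attach(ctx:Context3D, ns:NetStream):VideoTexture {
            if (!Context3D.supportsVideoTexture) {
                return null; // caller should fall back to StageVideo or Video
            }
            var vt:VideoTexture = ctx.createVideoTexture();
            vt.addEventListener(VideoTextureEvent.RENDER_STATE, onRenderState);
            vt.attachNetStream(ns);
            return vt;
        }

        private static function onRenderState(e:VideoTextureEvent):void {
            if (e.status == VideoStatus.ACCELERATED) {
                trace("VideoTexture: decoding on the GPU");
            } else if (e.status == VideoStatus.SOFTWARE) {
                trace("VideoTexture: fell back to software decoding");
            } else {
                trace("VideoTexture: video unavailable");
            }
        }
    }
}
```

What to do about a software fallback (lower the resolution, swap to StageVideo, or just log it) is up to the player.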

Will the web Flash Player ever get VideoTexture? Probably not, since WebGL has already taken off quite spectacularly and is just as easy to work with for GPU video playback. But that’s OK, since Adobe AIR is more for making apps instead of web pages, there still is a demand in that regard.

Conclusion

Even now, with all the advancements in technology, videos will continue to get refined with higher framerates, larger resolutions, and different file types. When that happens, Adobe has a choice: they can either put in the vast amounts of effort required to keep up with the advancements, or they can surrender playback functionality to the other, more specialized video players on and offline.

Regardless of the outcome, they have already come a long way in supporting and improving video playback in Flash, and thanks to the awesome power of CreateJS (no product placement intended), there’s definitely still a place for them in the widely evolving world of HTML5 and WebGL.

Many technologies have challenged the Flash Player in the past: SVG, Java, Silverlight, JavaScript, HTML5… Flash has proven that it can change itself and adapt to the future. This is the power of the Flash format: not to favor an ideology, but to select the best technology for creative ideas and to fill gaps in technology.

Also has the Open Source Media Framework(OSMF)

http://blogs.adobe.com/osmf/

VideoTexture meet ‘loss of context on rotation’ …

Too many issues to remain sane at the mo, otherwise a very useable ‘idea’.

That makes me want to see VideoTexture in regular Flash Player. WebGL is dandy and all, but I can’t view them because I’m stuck with an old browser (the new ones are too dumbed down in terms of customizability).

I remember being quite surprised when Flash MX came out. The change was radical. Back then, I’ve seen comments on how it was the second best thing to happen to Flash — the first would be 3D (which had to wait all the way until Flash Player 11).

…Wait a minute. Last I checked, VideoTexture is supported in AIR 17 and Flash Player 18. So it IS in both platforms!