Most of the products we deliver at gskinner are web-based applications. This means that one of our major goals is to have a QA process which ensures that they look and behave as expected across target devices and browsers, while having a fallback plan for those not supported. It’s a challenge in the modern web, especially when new technologies in the browser landscape are constantly emerging, while others are being refined or completely removed. This is the reason why we have a QA process that continues to evolve and expand. Here are a few ways that we currently approach it.

Use Reporting Software That Assists Everyone

One of the most vital pieces of our QA process is our bug tracking software. Issue trackers are used as a means of managing a project’s tasks from start to finish, so they must assist in keeping a project moving for everyone involved. We’ve tried a few bug trackers over the years, most recently JetBrains’ YouTrack, which we have used since early 2012. It does a really good job of showing the state of a ticket (its progress, priority, categorization, who it’s assigned to, etc.), is almost entirely edit-in-place, and comes packed with tons of features and customizations. One of our latest customizations has been to use the “Web Hooks” feature of our Git hosting server (GitLab) to post a comment on a YouTrack ticket and automatically mark the ticket as “Fixed” whenever a Git commit references it in its message (e.g. “Fixes projectx-35”). This removes the step of updating the ticket for the assignee, and instantly lets the reporter (who generally is a QA person) know that their ticket has been fixed and needs to go through QA. YouTrack also makes it easy for a tester to save custom queries for organizing tickets, so they can quickly get information about them.
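As a rough illustration of the commit-to-ticket step, here is a minimal Python sketch of the parsing half of such a hook. It is not our actual integration: the `updates_from_push` function, the `TicketUpdate` type and the exact “Fixes” pattern are assumptions made for this example, and it only relies on a push payload carrying a `commits` list whose entries have `id` and `message` fields. Sending the resulting updates to the issue tracker is deliberately left out.

```python
import re
from typing import NamedTuple

# Assumed convention: "Fixes <project>-<number>", e.g. "Fixes projectx-35".
FIXES_PATTERN = re.compile(r"\bfix(?:es|ed)?\s+([a-z][a-z0-9_]*-\d+)", re.IGNORECASE)


class TicketUpdate(NamedTuple):
    """What a hook would need to send to the issue tracker (hypothetical type)."""
    ticket_id: str
    commit_id: str
    comment: str


def updates_from_push(payload: dict) -> list:
    """Collect one update per ticket reference found in the pushed commits."""
    updates = []
    for commit in payload.get("commits", []):
        commit_id = commit.get("id", "")
        message = commit.get("message", "")
        for ticket_id in FIXES_PATTERN.findall(message):
            updates.append(TicketUpdate(
                ticket_id=ticket_id.lower(),
                commit_id=commit_id,
                comment=f"Fixed in commit {commit_id[:8]}: {message.strip()}",
            ))
    return updates
```

Each `TicketUpdate` would then be turned into a comment and a state change on the matching ticket. Keeping the parsing separate from the network calls makes it easy to test against sample payloads.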

Assign Multiple Roles

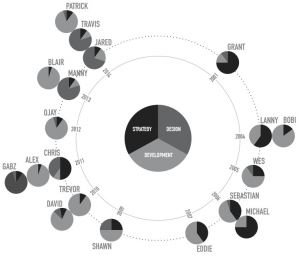

Having dedicated QA resources on all the cool projects we get is a real nice-to-have, but since the gskinner team is usually undertaking more than one project at any given time, this isn’t always possible. Because of this, we try to assign people as ad-hoc QA wherever we can. It’s even common to have someone be a developer on one project, but a QA person on another. This may seem like a negative at first, but there are a couple of bonuses: each person will get better at testing their own projects, and they’ll naturally use their own non-QA skill set to find and even diagnose problems within an application. For example, an experienced developer knows that jamming on the keyboard or chaotically clicking on buttons is an easy way to tell if an application is accounting for the myriad of things a user might unknowingly do. Likewise, an experienced designer can pick out usability issues based on their knowledge of how users expect an application to behave. This diversification ensures that a project not only meets its intended requirements, but excels in its presentation.

Use Tools to Account for the MANY Devices

There is a plethora of devices and browsers out there that should be accounted for, depending on the project. It isn’t always reasonable to buy and test on every device in the market because, well, there are so many! It isn’t entirely necessary anyway, because we tend to get projects that use newer technologies at gskinner. Most modern browsers have a fairly quick update process, so the inconsistencies of one browser between devices decrease day by day. If we can ensure an application looks and behaves as expected across all window sizes, taking into account mouse/touch/hybrid inputs, then we can be fairly certain we’ve covered most of the newer devices out there.

We use a few tools and techniques to accomplish this. Window sizes are probably the easiest thing to test: just resize the browser to be as small, big, tall or wide as it can go (check out Chris’ article on how to approach developing this). Google Chrome’s “Device Mode” is perfect for this because it gives you fine-tuned control over the dimensions as well as pixel ratios.

Ensuring an application accounts for multiple user inputs can be a little trickier though. This is where having a few real devices is useful, because behaviours like multi-touch and switching between mouse and touch are difficult to simulate. The gskinner team of just under twenty people (at the time of writing) is encouraged to use whichever operating system suits them best. This means that we get enough variety to test most of the browsers on Windows, OS X, Android, iOS, Firefox OS, and Windows Phone. We also have a few tablets (e.g. Surface Pro, iPad) and phones to fill in any gaps. Beyond that, the iOS Simulator in Xcode, Android’s Emulator, and OS virtual machines like Parallels, VMware Fusion and VirtualBox all do a really good job of enabling you to test on current and legacy devices.

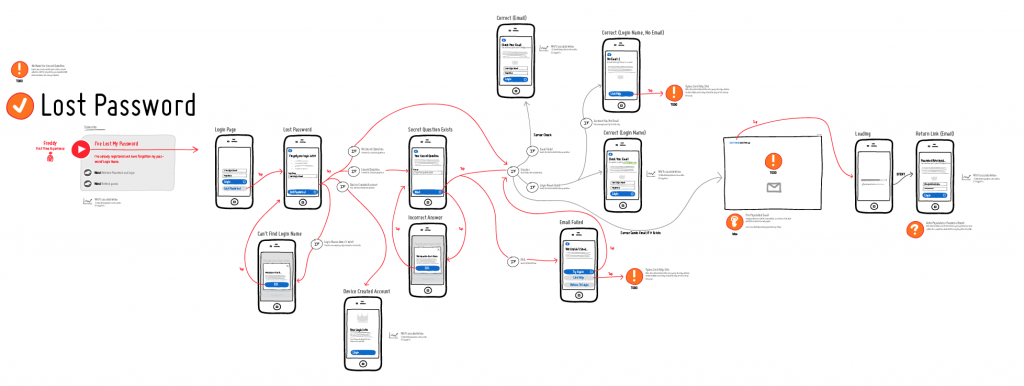

Automate User Flow Testing

The moment a feature is added to an application, like a button, menu, or dialog, a new user flow is created. This growth is exponential in nature, and testing becomes harder and more redundant as an application grows in complexity. On top of that, a single user flow can work fine on a specific device with a certain browser at a certain window size, but break on another. The possibilities can seem endless.

(made with Jakub Linowski’s Interactive Sketching Notation)
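To get a feel for how quickly those combinations pile up, here is a small Python sketch that enumerates a test matrix. Every value in it (the browser list, viewport sizes, pixel ratios and the `build_test_matrix` helper itself) is an illustrative assumption, not a list of our real targets.

```python
from itertools import product

# All values below are illustrative assumptions, not real project targets.
BROWSERS = ["chrome", "firefox", "safari"]
VIEWPORTS = [(320, 480), (768, 1024), (1920, 1080)]  # (width, height) in CSS pixels
PIXEL_RATIOS = [1, 2]
INPUT_MODES = ["mouse", "touch", "hybrid"]


def build_test_matrix(flows):
    """Return one test case per combination of flow, browser, viewport, ratio and input."""
    return [
        {
            "flow": flow,
            "browser": browser,
            "viewport": viewport,
            "pixel_ratio": ratio,
            "input": input_mode,
        }
        for flow, browser, viewport, ratio, input_mode in product(
            flows, BROWSERS, VIEWPORTS, PIXEL_RATIOS, INPUT_MODES
        )
    ]


matrix = build_test_matrix(["login", "checkout"])
print(len(matrix))  # 2 flows x 3 browsers x 3 viewports x 2 ratios x 3 inputs = 108
```

Even with these modest lists, each new flow adds another 54 cases to check, which is why checking everything by hand stops being practical very quickly.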

One approach we take is to automate as much of the QA process as possible. When it makes sense, unit tests can be used to confirm outputs and simple user interactions that are easy to calculate. Our CreateJS libraries are a prime example of this, as each of them has a set of unit tests (e.g. EaselJS) which get run every time a change or new build is made to ensure functionality hasn’t broken. Doing this frees up time to focus on the more complex interactions that are difficult, if not impossible, to automate.

(further reading on Automation in Your Daily Workflow)
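For readers who haven’t written one, this is roughly what a unit test for an “easy to calculate” output looks like. It is a generic Python sketch using the standard `unittest` module, not a test from the CreateJS suites; the `fit_within` function is a hypothetical helper invented for this example.

```python
import unittest


def fit_within(width, height, max_width, max_height):
    """Scale (width, height) down to fit the bounds, preserving aspect ratio.

    Hypothetical helper used only to give the tests something to check.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    # Never scale up: the factor is capped at 1.0.
    scale = min(max_width / width, max_height / height, 1.0)
    return (round(width * scale), round(height * scale))


class FitWithinTest(unittest.TestCase):
    def test_smaller_content_is_unchanged(self):
        self.assertEqual(fit_within(200, 100, 800, 600), (200, 100))

    def test_wide_content_is_limited_by_width(self):
        self.assertEqual(fit_within(1600, 400, 800, 600), (800, 200))

    def test_tall_content_is_limited_by_height(self):
        self.assertEqual(fit_within(400, 1200, 800, 600), (200, 600))

    def test_rejects_non_positive_sizes(self):
        with self.assertRaises(ValueError):
            fit_within(0, 100, 800, 600)
```

Saved in a file, these can be run with `python -m unittest` on every change or build. Checks like this cost almost nothing to repeat, which is exactly what makes them worth automating.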

This is where manual testing comes in. We try to employ tools which allow us to sync actions across all instances running the app. BrowserSync is one of the latest ones we’ve tried out – it syncs mouse clicks, scrolls and form actions across every device that’s running your application (among all its other awesome development features). Once we have it set up, we can literally sit back with a single tablet in hand, tap around, and watch all the other 10+ devices mimic the same actions. This system still needs improvement though, like having a way to record interactions to replay later.

Keep Moving

What’s important is that we exercise the ability to try new things to improve efficiency and quality. As each project comes and goes, we can then establish these techniques and tools as best practices to use on future ones. This not only keeps us on top of emerging technologies, but ensures we successfully deliver every project.

If your approach to QA testing has been similar or vastly different, I’d love to hear about it! What are some ways you take on the challenge of QA in the shifting landscape of web-based technologies? Do you have any suggestions for tools to use to ease user flow testing?